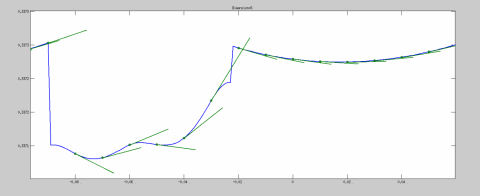

What I am doing now is getting the tangent line of the above example, and I am doing it like this, which works: I set `x = linspace(0,10,1000)`, evaluate the function at the tangent point with `(A.*Location.^3)+(B.*Location.^2)+(C.*Location)+D`, compute the slope with `slop=3*A.*(Location^2)+2*B.*Location+C`, and label the plot with `legend('Graph of the function','Tangent Line')`.

What I mean is: what should I use instead of the hand-calculated derivative in this line: `slop=3*A.*(Location^2)+2*B.*Location+C`
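One way to avoid the hand-written slope is to let `polyder` differentiate the coefficient vector. This is a minimal sketch, not the asker's actual script: the coefficient values and the value of `Location` below are made up for illustration, and `slope`, `y0` and `tangent` are names introduced here.

```matlab
% Hypothetical coefficients and tangent point (not given in the question)
A = 1; B = -2; C = 3; D = 4;
Location = 2;

p  = [A B C D];      % cubic coefficients, highest power first
dp = polyder(p);     % derivative coefficients: [3*A 2*B C]

x = linspace(0, 10, 1000);
Y = polyval(p, x);                       % the cubic on the grid

slope   = polyval(dp, Location);         % replaces 3*A*Location^2 + 2*B*Location + C
y0      = polyval(p, Location);          % function value at the tangent point
tangent = slope .* (x - Location) + y0;  % tangent line on the same grid
```

To reproduce the figure, `plot(x, Y, x, tangent)` followed by `legend('Graph of the function','Tangent Line')` should be enough.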

For example, I want the derivative at each point of the example above for `n = linspace(0,10,1000)`. Of course I can do this manually, but I would really like to know how to do it with MATLAB itself.
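For a whole range of points, two approaches come to mind: evaluating the `polyder` result with `polyval` (exact for polynomials), or applying `gradient` to sampled values (works for any sampled data, but only approximately). The sketch below assumes the same made-up coefficients as an illustration; `dExact`, `dNumeric` and `maxInteriorErr` are names introduced here.

```matlab
% Hypothetical coefficients (not given in the question)
A = 1; B = -2; C = 3; D = 4;
p = [A B C D];

n = linspace(0, 10, 1000);

% Exact derivative of the polynomial at every point of n
dExact = polyval(polyder(p), n);

% Numerical derivative from sampled values only
Y        = polyval(p, n);
dNumeric = gradient(Y, n);   % central differences inside, one-sided at the two ends

% The end points are less accurate, so compare interior points only
maxInteriorErr = max(abs(dNumeric(2:end-1) - dExact(2:end-1)));
fprintf('largest interior error of gradient: %.2e\n', maxInteriorErr);
```

If the Symbolic Math Toolbox is available, `diff` applied to a symbolic expression is another option; it is left out here because not every installation has that toolbox.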

In real life it is a piece of cake, but how do you get the derivative of a quadratic or cubic function in MATLAB? For example, the derivative of `A*x^3 + B*x^2 + C*x + D` is `3*A*x^2 + 2*B*x + C`. I want to get this in MATLAB, but I can't figure out how :( For example, I tried this code but I get a stupid result (maybe I am the one to blame!): > x = Also, I want to know how to do this for a range of numbers.

Richardson extrapolation is a good start for smooth functions. But those methods typically compute f quite a few times, which may or may not be what you want if your function is complicated. If you are going to use numerical derivatives many times at different points, it becomes interesting to construct a Chebyshev approximation.
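As a rough illustration of Richardson extrapolation, the sketch below combines two central differences, one with step `h` and one with step `h/2`, so that the leading h^2 error term cancels. The test function `sin`, the point `x0` and the step `h` are assumptions chosen only because the exact derivative is known.

```matlab
% Illustrative smooth function with a known derivative, cos(x)
f  = @(x) sin(x);
x0 = 1;
h  = 0.1;

% Central difference with step s
D = @(s) (f(x0 + s) - f(x0 - s)) ./ (2 * s);

% One Richardson step: the h^2 error terms of D(h) and D(h/2) cancel
dCentral    = D(h);
dRichardson = (4 * D(h / 2) - D(h)) / 3;

errCentral    = abs(dCentral - cos(x0));
errRichardson = abs(dRichardson - cos(x0));
fprintf('central: %.2e   Richardson: %.2e\n', errCentral, errRichardson);
```

Note the cost: this single step already needs four evaluations of `f`, which is the trade-off mentioned above.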

If your function is known to be twice differentiable, use f'(x) = (f(x + h) - f(x - h)) / (2h)

If it is only once differentiable, use f'(x) = (f(x + h) - f(x)) / h (*)

I'll take the second formula (first order) as the analysis is simpler.

The very first observation is that you must make sure that (x + h) - x = h, otherwise you get huge errors. Indeed, f(x + h) and f(x) are close to each other (say 2.0456 and 2.0467), and when you subtract them, you lose a lot of significant figures (here the difference is 0.0011, which has 3 fewer significant figures than the values themselves). So any error on h is likely to have a huge impact on the result.

So, first step: fix a candidate h (I'll show you in a minute how to choose it), and take as h for your computation the quantity h' = (x + h) - x. If you are using a language like C, you must take care to define h or x as volatile so that this computation is not optimized away.

The error in (*) has two parts: the truncation error and the roundoff error.

The truncation error is because the formula is not exact: (f(x + h) - f(x)) / h = f'(x) + e1(h). By Taylor's theorem, e1(h) ~ h |f''(x)| / 2.

The roundoff error comes from the fact that f(x + h) and f(x) are close to each other. It can be estimated roughly as e2(h) ~ epsilon_f |f(x) / h|, where epsilon_f is the relative precision in the computation of f(x) (or f(x + h), which is close). This has to be assessed from your problem. For simple functions, epsilon_f can be taken as the machine epsilon. For more complicated ones, it can be worse than that by orders of magnitude.

So you want the h which minimizes e1(h) + e2(h). Plugging everything together and optimizing in h yields h ~ sqrt(2 * epsilon_f * f / f''), which has to be estimated from your function. When in doubt, take h ~ sqrt(epsilon), where epsilon is the machine accuracy. For the optimal choice of h, the relative accuracy to which the derivative is known is sqrt(epsilon_f), i.e. half the significant figures are correct.

For the second order formula, the same computation yields h ~ (6 * epsilon_f * f / f''')^(1/3) and a fractional accuracy of (epsilon_f)^(2/3) for the derivative (which is typically one or two significant figures better than the first order formula, assuming double precision).

In short: too small an h => roundoff error, too large an h => truncation error.

If this is too imprecise, feel free to ask for more methods; there are a lot of tricks to get better accuracy.
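The recipe above can be tried out in MATLAB roughly as follows. This is a sketch under assumptions: the test function `exp` and the point `x0` are picked only because the exact derivative is known, epsilon_f is taken to be the machine epsilon `eps`, and scaling the step by `max(abs(x0), 1)` is a common convention rather than something stated in the answer.

```matlab
% Illustrative function with a known derivative (exp is its own derivative)
f      = @(x) exp(x);
fprime = @(x) exp(x);
x0     = 1;

% Rule-of-thumb steps, assuming epsilon_f is the machine epsilon
scale = max(abs(x0), 1);
hFwd  = sqrt(eps) * scale;     % first order formula: h ~ sqrt(epsilon)
hCen  = eps^(1/3) * scale;     % second order formula: h ~ epsilon^(1/3)

% Make sure (x + h) - x equals h: use h' = (x + h) - x
hFwd = (x0 + hFwd) - x0;
hCen = (x0 + hCen) - x0;

dFwd = (f(x0 + hFwd) - f(x0)) / hFwd;               % formula (*)
dCen = (f(x0 + hCen) - f(x0 - hCen)) / (2 * hCen);  % centered formula

errFwd = abs(dFwd - fprime(x0)) / abs(fprime(x0));
errCen = abs(dCen - fprime(x0)) / abs(fprime(x0));
fprintf('relative error, forward: %.2e   central: %.2e\n', errFwd, errCen);

% Sweep h for formula (*): the error first shrinks (truncation), then grows (roundoff)
hs   = 10.^(-1:-2:-15);
errs = arrayfun(@(h) abs((f(x0 + h) - f(x0)) / h - fprime(x0)), hs);
[~, kBest] = min(errs);
fprintf('best h in the sweep: %.0e\n', hs(kBest));
```

The sweep at the end is only there to make the two error regimes visible; in real code one would pick h from the estimates directly instead of searching for it.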